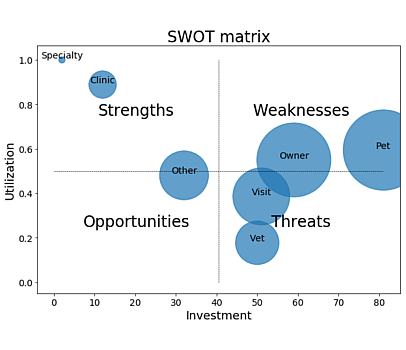

SWOT analysis for spotting worthless code

In this short blog post, I want to show you an idea where you take some very detailed datasets from a software project and transform it into a representation where management can reason about…

Knowing everything about the software system we are developing is valuable, but today this is too often a rare situation. In times of shortages of software development experts and stressful software projects, high turnover in teams quickly leads to lost knowledge about the source code…

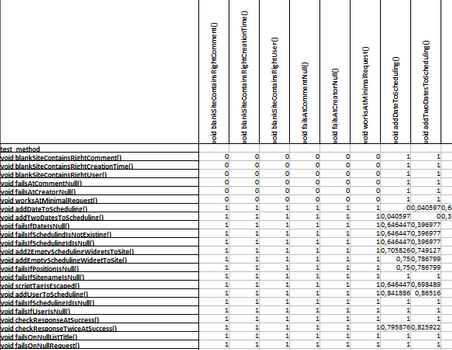

In this data analysis … we want to spot test cases that are structurally very similar and can thus be seen as duplicates. We’ll calculate the similarity between tests based on their invocations of production code…
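To illustrate what "similarity based on invocations of production code" can mean, here is a minimal sketch. It assumes each test case has already been mapped to the set of production methods it calls (all test and method names below are hypothetical) and scores each pair of tests with the cosine similarity of their binary invocation vectors. The measure and the `threshold` value are assumptions for illustration; the actual analysis may use a different measure or cut-off.

```python
from itertools import combinations
from math import sqrt

# Hypothetical input: each test case mapped to the production methods it invokes.
INVOCATIONS = {
    "CommentServiceTest.testAdd": {"CommentService.add", "CommentRepository.save"},
    "CommentServiceTest.testAddTwice": {"CommentService.add", "CommentRepository.save"},
    "UserServiceTest.testLogin": {"UserService.login", "UserRepository.find"},
}


def cosine_similarity(a: set[str], b: set[str]) -> float:
    """Cosine similarity of two binary invocation vectors, represented as sets.

    For 0/1 vectors the dot product is the size of the intersection and each
    vector's norm is the square root of its number of invoked methods.
    """
    if not a or not b:
        return 0.0
    return len(a & b) / sqrt(len(a) * len(b))


def similar_test_pairs(invocations: dict[str, set[str]], threshold: float = 0.9):
    """Return (test_a, test_b, score) for every pair scoring at or above the threshold."""
    pairs = []
    # Sorting by test name makes the output order deterministic.
    for (name_a, calls_a), (name_b, calls_b) in combinations(sorted(invocations.items()), 2):
        score = cosine_similarity(calls_a, calls_b)
        if score >= threshold:
            pairs.append((name_a, name_b, round(score, 3)))
    return pairs


if __name__ == "__main__":
    for test_a, test_b, score in similar_test_pairs(INVOCATIONS):
        print(f"{test_a} ~ {test_b}: {score}")
```

With the sample data, only `testAdd` and `testAddTwice` are reported, because they invoke exactly the same production methods. A pair like that is a candidate for a closer manual look, not automatically a duplicate.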

The nice thing about reproducible data analysis (like the kind I’m trying to do here on my blog) is, well, that you can quickly reproduce or even replicate an analysis.

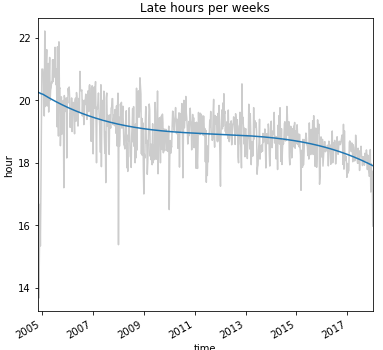

So, in this blog post/notebook, I transfer the analysis from “Developers’ Habits (IntelliJ Edition)” to another project: the famous open-source operating system Linux…

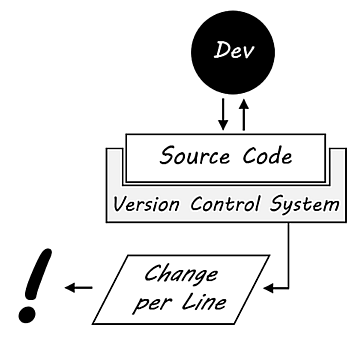

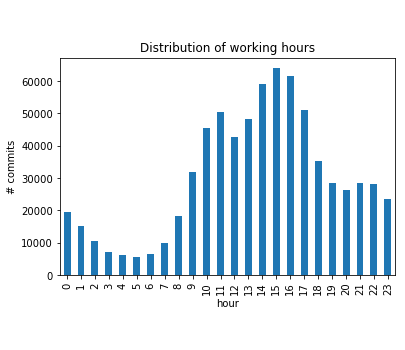

In this blog post/notebook, we want to take a look at how much information you can extract from a simple Git log output. We want to know where the developers come from, on which weekdays the developers don’t work, which developers work on weekends, what the normal working hours are, and whether there is any sign of overtime periods…
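As a rough idea of how such questions can be answered from plain `git log` output, here is a minimal sketch. It assumes the log was exported with `git log --pretty=format:"%an|%ad" --date=iso` (author name and author date with its UTC offset); the sample lines and author names are made up. Weekdays and hours are taken in each author's local time as recorded in the commit, and the UTC offset serves only as a rough hint of where a developer is located. Commit timestamps show when commits were created, not when people actually worked, so overtime detection is left out here.

```python
from collections import Counter
from datetime import datetime

# Hypothetical sample of lines as produced by:
#   git log --pretty=format:"%an|%ad" --date=iso
SAMPLE_LOG = """\
Alice|2017-02-20 14:33:07 +0100
Bob|2017-02-25 10:02:51 -0800
Alice|2017-02-26 21:15:00 +0100
Carol|2017-02-21 09:45:12 +0530"""


def parse_log(text: str) -> list[tuple[str, datetime]]:
    """Split each line into the author and a timezone-aware author date."""
    entries = []
    for line in text.splitlines():
        if not line.strip():
            continue
        # rsplit: the date never contains "|", so an odd author name stays intact.
        author, date = line.rsplit("|", 1)
        entries.append((author, datetime.strptime(date, "%Y-%m-%d %H:%M:%S %z")))
    return entries


def summarize(entries: list[tuple[str, datetime]]):
    """Count commits per local weekday and hour, and collect per-author hints."""
    weekdays = Counter(ts.strftime("%A") for _, ts in entries)
    hours = Counter(ts.hour for _, ts in entries)
    # Last seen UTC offset per author, a rough proxy for their location.
    offsets = {author: ts.strftime("%z") for author, ts in entries}
    # weekday() returns 5 for Saturday and 6 for Sunday.
    weekend = sorted({author for author, ts in entries if ts.weekday() >= 5})
    return weekdays, hours, offsets, weekend


if __name__ == "__main__":
    weekdays, hours, offsets, weekend = summarize(parse_log(SAMPLE_LOG))
    print("Commits per weekday:", dict(weekdays))
    print("Commits per hour:", dict(sorted(hours.items())))
    print("UTC offsets:", offsets)
    print("Weekend committers:", weekend)
```

For a real repository, you would write the `git log` output to a file and pass its contents to `parse_log` instead of `SAMPLE_LOG`.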