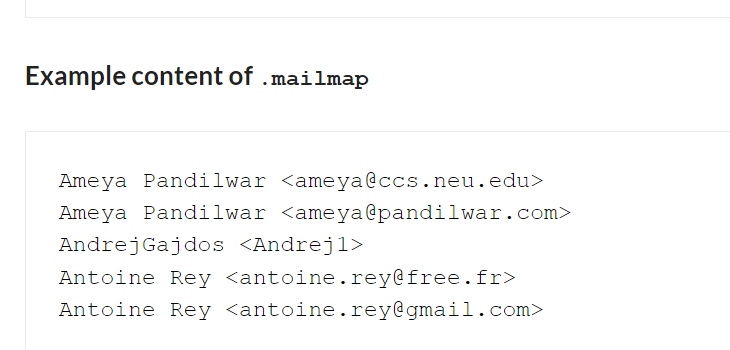

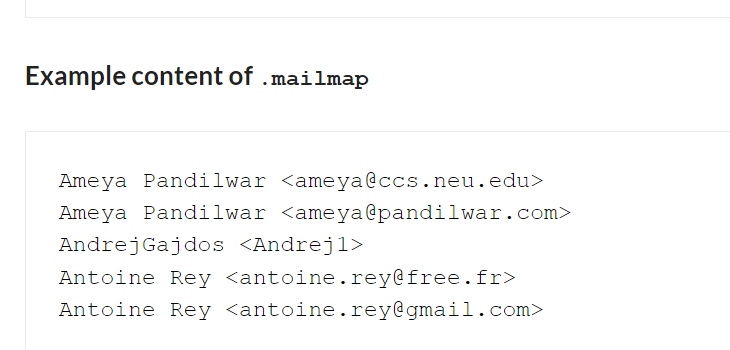

Software Archaeology with Git

Here is an older cheat sheet that I rediscovered, with some git tricks for extracting data from source code repositories, either for data-driven software analytics or for gaining some initial insights into a software development project.
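As a rough illustration of the kind of data extraction meant here (not taken from the cheat sheet itself), the following sketch parses the text produced by `git log --numstat --date=short --pretty=format:"--%h--%ad--%an"` into one record per changed file. The function names `parse_numstat_log` and `changes_per_file`, the `--` header prefix, and the sample log are assumptions made for this example.

```python
from collections import Counter

# Assumed command, run inside a repository:
#   git log --numstat --date=short --pretty=format:"--%h--%ad--%an"
# Every commit then starts with a header line such as
#   --abc1234--2020-01-01--Alice
# followed by tab-separated lines: added<TAB>deleted<TAB>path


def parse_numstat_log(text):
    """Return one dict per changed file per commit (hypothetical helper)."""
    rows = []
    current = None
    for line in text.splitlines():
        if line.startswith("--"):
            # Header line: short hash, date, author name.
            sha, date, author = line[2:].split("--", 2)
            current = {"sha": sha, "date": date, "author": author}
        elif line.strip() and current is not None:
            added, deleted, path = line.split("\t", 2)
            # Git reports "-" instead of a number for binary files.
            rows.append({
                **current,
                "added": int(added) if added != "-" else 0,
                "deleted": int(deleted) if deleted != "-" else 0,
                "path": path,
            })
    return rows


def changes_per_file(rows):
    """Count in how many commits each path was touched."""
    return Counter(row["path"] for row in rows)


# Made-up sample log used only for demonstration.
SAMPLE_LOG = (
    "--abc1234--2020-01-01--Alice\n"
    "3\t1\tsrc/a.py\n"
    "-\t-\tlogo.png\n"
    "\n"
    "--def5678--2020-01-02--Bob\n"
    "10\t0\tsrc/a.py\n"
)

rows = parse_numstat_log(SAMPLE_LOG)
hotspots = changes_per_file(rows)
```

Files that appear in many commits are often a reasonable first place to look when exploring an unfamiliar project. On a real repository, the log text could be obtained with `subprocess.run([...], capture_output=True, text=True).stdout` instead of the sample string.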

I wrote a blog post about Defect Analysis, an analysis technique for getting insights into how buggy your system might be.

I tried to create a little guide / cheat sheet that could support you during your own first experiments with Software Analytics.

I’ve started to gather some valuable information about Software Analytics in an awesome list. I hope to get more people interested in this fascinating topic. The resources should be as applicable in practice as possible.

People at conferences and meetups often ask me what I would recommend for learning X or Y, and I’m always happy to give some suggestions depending on the experience level of the person asking. Unfortunately, this doesn’t scale very well, so here are my general recommendations for learning a topic effectively. This time: Software Analytics.