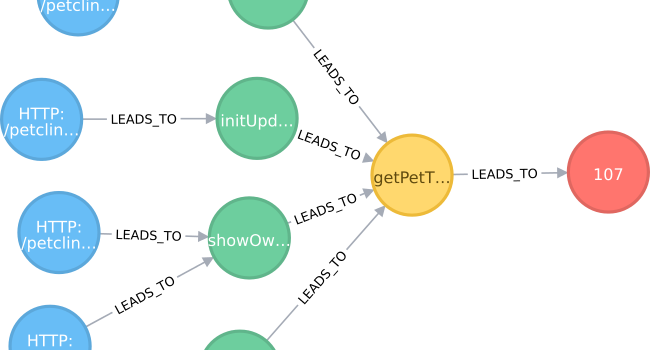

All the work so far was just there to get a nice graph model that feels more natural. Now comes the analysis part. As mentioned in the introduction, we don’t only want the hotspots that signal that something awkward happened, but also

- the trigger of the hotspot in our application, combined with
- the information about the entry point (i.e. where in our application the problem happens), and
- (optionally) the request that causes the problem (to be able to localize the problem)…
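To make the difference between hotspot, trigger and entry point more concrete, here is a minimal Python sketch that works on a single, flattened call stack instead of on the graph model. It is not the analysis used in this post. It assumes that the frames are ordered from the outermost caller to the innermost hotspot and that our application's code can be recognized by a package prefix. `APP_PREFIX`, `Finding` and `analyze_stack` are hypothetical names chosen for illustration.

```python
from dataclasses import dataclass
from typing import Optional, Sequence

# Hypothetical package prefix that marks "our application" code.
APP_PREFIX = "com.example.app."


@dataclass
class Finding:
    hotspot: str                 # innermost frame where the time is spent
    trigger: Optional[str]       # innermost application frame above the hotspot
    entry_point: Optional[str]   # outermost application frame (where the problem enters our code)
    request: Optional[str]       # optional request that caused the call stack


def analyze_stack(frames: Sequence[str],
                  request: Optional[str] = None,
                  app_prefix: str = APP_PREFIX) -> Finding:
    """Classify the frames of one call stack.

    frames must be ordered from the outermost caller to the innermost hotspot.
    """
    if not frames:
        raise ValueError("empty call stack")
    hotspot = frames[-1]
    # Only look at the callers of the hotspot that belong to our application.
    app_frames = [f for f in frames[:-1] if f.startswith(app_prefix)]
    entry_point = app_frames[0] if app_frames else None
    trigger = app_frames[-1] if app_frames else None
    return Finding(hotspot, trigger, entry_point, request)


if __name__ == "__main__":
    stack = [
        "org.apache.catalina.Servlet.service",
        "com.example.app.web.OrderController.list",
        "com.example.app.service.OrderService.load",
        "org.hibernate.Query.list",
        "java.net.SocketInputStream.read",
    ]
    print(analyze_stack(stack, request="GET /orders"))
```

For this example stack, the trigger is `OrderService.load` (the last of our own methods before the time-consuming library call), while the entry point is `OrderController.list` (where the request first reaches our code). In the graph model, the same idea would be expressed as a path query from the request down to the hotspot node.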